Yuval Noah Harari describes “algorithm” as one of the central concepts of our time. In the age of AI, algorithms determine information flows, visibility, and evaluation, and thus power. At the same time, the term itself has become the subject of intensive research: countless texts examine the meaning and origin of the word. Yet the dominant narrative, which traces the word to the scholar al-Ḫwārizmī, has still not been proven.

Algorithms as a trust problem

Today, “algorithm” no longer stands only for mathematics or computer science; it also stands for a new question of trust:

- Many see algorithms as a symbol of objective rationality (“the AI knows better”).

- At the same time, distrust and fear of disinformation, hallucinations, and “fake truth” are growing.

Algorithms also influence the internal confidence of AI systems – how strongly an AI “believes” in its own answer and how it evaluates criticism. This produces a double trust conflict:

- How far can humans trust AI?

- How far can AI trust itself (and its training data)?

The dominant narrative: al-Ḫwārizmī as namesake

If you ask today’s AI systems about the etymology, the standard answer is almost always that the term derives from the name of the scholar Muḥammad ibn Mūsā al-Ḫwārizmī, who wrote in Arabic. Dictionaries, specialist literature, and encyclopedias have long reproduced this derivation, and these very sources in turn form training data for modern AI. But beware: maximum consensus is not automatically maximum proof.

The trigger: an AI hallucination as a starting signal

The analysis starts from a coincidence: in a ChatGPT query, the author stumbles upon a plausible-sounding bibliographic reference that turns out to be completely false, a classic case of AI hallucination. Added to this are unconvincing claims by the AI about Fibonacci’s negative view of the algorithm. The AI visibly entangles itself in contradictions that it cannot resolve and instead keeps amplifying.

This sets off a chain reaction:

- If even concrete source references are invented, how stable is the entire etymology narrative?

- Further research reveals additional inconsistencies, contradictory statements, and quotations attributed to medieval and 19th-century sources, some of them fabricated.

- AI is both problem and tool: it hallucinates, but it can also help uncover contradictions in texts written by others (including Wikipedia).

“Algorithmic untruth”: data vs. method

Different forms of potential “AI untruths” should be distinguished:

- correct data + wrong algorithms

- wrong data + correct algorithms

- wrong data + wrong algorithms

For the eponym problem, the second variant is especially relevant: if training data are questionable in content despite their wide circulation, an AI can still “process” them correctly and thereby spread an error with great persuasive force.

The provocative result: consensus by circularity and citogenesis?

Stated sharply, the core thesis is this: the eponym (“algorithm comes from al-Ḫwārizmī”) solidified in the 19th century partly through circular reasoning and was then stabilized through citogenesis (citations that arise from other citations rather than from primary evidence), much like a system that proves itself.

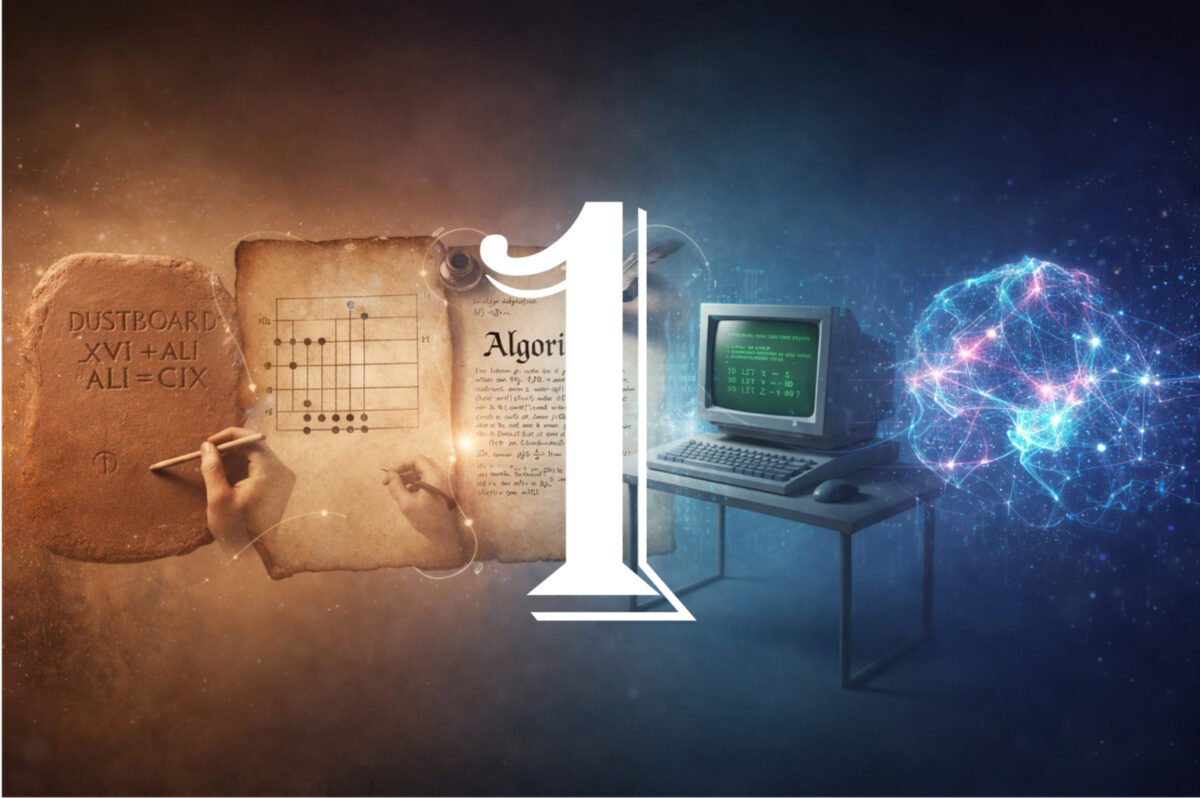

Three phases of the term’s “odyssey”

The development can be described in a three-phase model:

- From the Middle Ages up to the 19th century: a functional interpretation of the word is advocated throughout. Moreover, there is no secure evidence that al-Ḫwārizmī is the namesake.

- Mid to late 19th century: starting from speculation and asserted proofs, the al-Ḫwārizmī narrative develops into a “certainty,” but without hard evidence.

- From around 1870 to today: the eponym is consolidated through dictionaries, secondary literature, and later corrections and additions, culminating in today’s “seemingly proven” standard story.

The counterposition: the RAE and ḥisāb al-ġubār

A further, surprising insight emerges: there is a little-known alternative. The Real Academia Española (RAE) traces the origin instead to ḥisāb al-ġubār (roughly, calculation with Arabic numerals, or “sand reckoning”) and additionally introduces “algobarismo” as a bridging term, though without an extensive chain of evidence. What if, in the end, the functional derivation can be better supported than the eponym?

Myth against myth: what do we learn from this?

In the end, this is about more than word history; it points to a problem of the AI age:

- Humans build narratives that come to feel “true” through consensus.

- AI spreads narratives because they dominate its training data, even when they are shaky.

But the combination of digital archives, search engines, and AI can also enable a kind of “deep truth”: it can expose errors that were previously hidden in the fog of secondary citations.

Conclusion: etymology as a stress test for knowledge in the AI age

The origin of the word “algorithm” becomes a touchstone for how knowledge emerges, how it is canonized – and how quickly seemingly secure truths can take on a life of their own. More consensus does not automatically mean more evidence – and more AI does not automatically mean more truth.